NVIDIA has shaken up AI hardware with the DGX Spark, an AI supercomputer small enough to carry under your arm. Shipping starts this week and puts that power where developers need it most: in their own workspaces.

Shipping begins October 15 from NVIDIA’s website, with partner models from Acer, ASUS, Dell, GIGABYTE, HP, Lenovo, and MSI. Units will also be available at Micro Center locations in the United States and through NVIDIA’s global channel partners. The base unit will cost roughly $3,000, according to the company. That lets solo coders, small teams, and even students take on intensive AI development without breaking the bank or the grid.

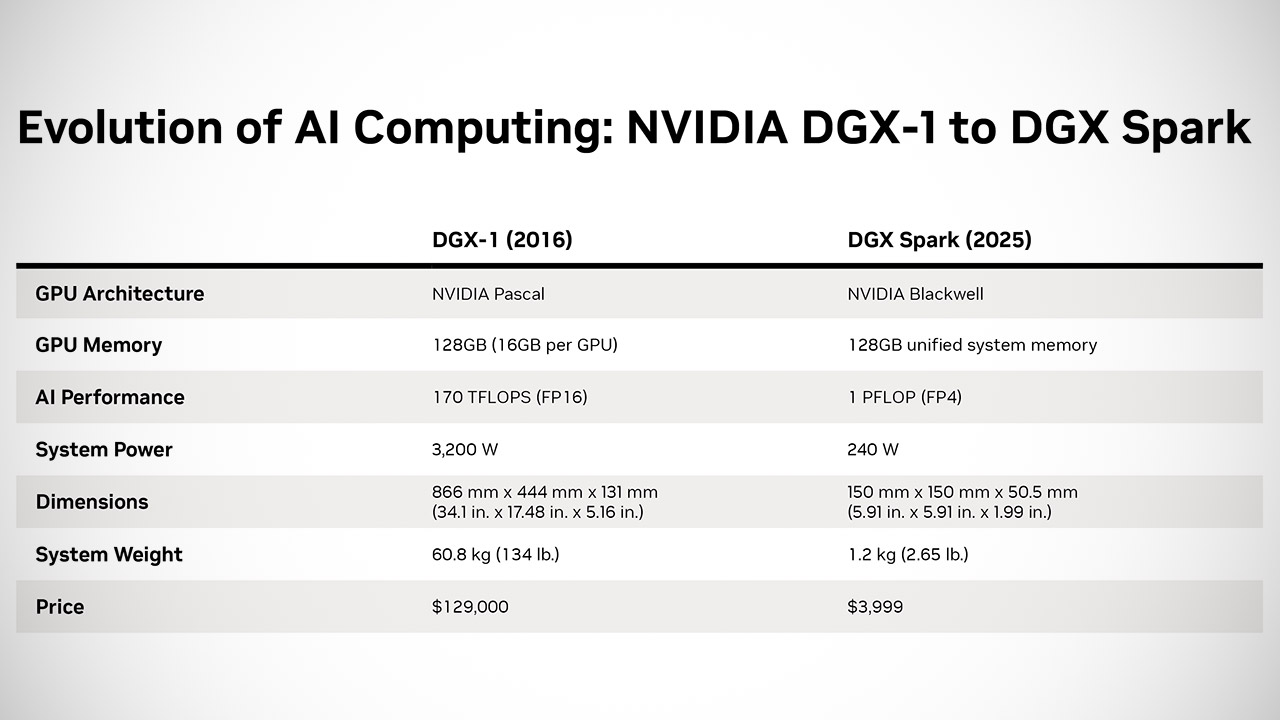

The DGX Spark is built on NVIDIA’s Grace Blackwell architecture and combines GPUs, CPUs, high-speed networking, and a full suite of AI tools in one package. A single GB10 Grace Blackwell Superchip powers it all, with ConnectX-7 networking at 200 gigabits per second and NVLink-C2C for high-bandwidth data transfer between the CPU and GPU. The system offers 128 gigabytes of shared memory, coherent across CPU and GPU, and 4 terabytes of NVMe storage for your datasets. Plug it into a wall socket and it sips power like a desktop, not a server farm.

The figures are impressive: one petaflop of AI performance, or a quadrillion (10^15) operations per second. Developers can run inference on models with up to 200 billion parameters or fine-tune models with up to 70 billion, all locally. That means large language models and vision systems can be customized without sending data offsite. NVIDIA preloads the machine with its AI software stack, including CUDA libraries, NIM microservices, and ecosystem extras such as pre-built models. Out of the box, you can customize Black Forest Labs image generators, create search agents using the Cosmos Reason model, and build chatbots optimized for the hardware.
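To see how a 200-billion-parameter model could plausibly fit in 128 gigabytes of shared memory, here is a rough back-of-the-envelope sketch. It counts weight storage only and assumes 4-bit quantized weights for the large-model case; the precision choices are illustrative assumptions, not figures from NVIDIA, and real workloads also need room for activations, the KV cache, and (for fine-tuning) gradients and optimizer state.

```python
# Rough estimate of model weight storage versus the 128 GB of shared memory
# quoted for the DGX Spark. Weights only: activations, KV cache, and
# training state are deliberately ignored in this sketch.

UNIFIED_MEMORY_GB = 128  # shared CPU/GPU memory, as stated in the article


def weight_footprint_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


def fits_in_memory(params_billion: float, bits_per_param: int,
                   memory_gb: float = UNIFIED_MEMORY_GB) -> bool:
    """True if the weights alone fit within the given memory budget."""
    return weight_footprint_gb(params_billion, bits_per_param) <= memory_gb


if __name__ == "__main__":
    # The bit widths below are assumed for illustration only.
    cases = [
        ("200B params, 4-bit weights", 200, 4),
        ("200B params, 16-bit weights", 200, 16),
        ("70B params, 16-bit weights", 70, 16),
        ("70B params, 8-bit weights", 70, 8),
    ]
    for label, params, bits in cases:
        size = weight_footprint_gb(params, bits)
        verdict = "fits" if fits_in_memory(params, bits) else "does not fit"
        print(f"{label}: ~{size:.0f} GB of weights, {verdict} in {UNIFIED_MEMORY_GB} GB")
```

Under these assumptions, the 200-billion-parameter case only works with aggressively quantized weights, and a 70-billion-parameter model at 16 bits already exceeds 128 GB for weights alone, which suggests that local fine-tuning at that scale would rely on reduced precision or parameter-efficient methods.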

Anaconda, Cadence, Docker, Google, Hugging Face, JetBrains, Meta, Microsoft, Ollama, and Roboflow are among the first to receive units and begin tuning their software to run faster on Spark. Hardware partners moved quickly as well, with ASUS, Dell, and HP creating customized versions of the design. The Spark has a bigger sibling, the DGX Station, but details are not yet known.